Your competitors are shipping new ad creatives every single day. Manually tracking and analyzing these campaigns is impossible at scale. In this workflow teardown, we explore a production-ready **automated competitor ad analysis pipeline** that captures ad creatives, converts them to markdown using ocrskill, and leverages a secondary AI model to structure that text for downstream competitive intelligence.

## The Ad Analysis Automation Architecture

A robust ad tracking automation pipeline consists of four distinct stages:

1. **Capture** - A headless browser scrapes ad libraries (e.g., Meta Ad Library, Google Ads Transparency Center).
2. **Transcribe** - ocrskill converts each visual ad creative into clean, readable markdown.
3. **Structure** - A separate Large Language Model (LLM) maps the extracted markdown into a strict JSON schema.
4. **Report** - Structured competitive data is pushed to BI dashboards and Slack alerts.

## Why OCR Matters for Competitor Ad Tracking

Ad creatives are designed to be visually compelling to humans, not machine-readable for scrapers. Critical text is embedded in images, overlaid on videos, and styled in ways that easily defeat simple HTML scraping. ocrskill handles the complex **OCR (Optical Character Recognition) layer**, transforming those visuals into markdown that preserves both the readable text and the original layout cues.

This distinction is crucial: ocrskill is not the system deciding what constitutes a headline, a Call-To-Action (CTA), a special offer, or legal disclaimers. It is purely the OCR data extraction step. The actual schema mapping and intelligence gathering happen afterward using a general-purpose AI model.

## Step 1: OCR Data Extraction to Markdown

```python
import asyncio
from openai import AsyncOpenAI

# ocrskill exposes an OpenAI-compatible endpoint, so the standard SDK works.
ocr_client = AsyncOpenAI(
    base_url="https://api.ocrskill.com/v1",
    api_key="sk-your-key"
)

async def image_to_markdown(image_url: str) -> str:
    response = await ocr_client.chat.completions.create(
        model="ocrskill-v1",
        messages=[{
            "role": "user",
            "content": [
                {"type": "text", "text": "Convert this ad creative to markdown. Preserve all visible text and basic structure."},
                {"type": "image_url", "image_url": {"url": image_url}}
            ]
        }]
    )
    return response.choices[0].message.content
```

The output from this initial step is raw markdown, not structured JSON. A typical result contains the main headline, supporting body text, offer language, and legal copy, in the order they appear within the ad image.

## Step 2: Structuring AI Ad Intelligence with an LLM

Once the ad creative is transcribed into markdown, a secondary AI model normalizes the text into structured fields for analysis. This is where you infer and extract specific competitive intelligence data points:

- `brand_name`
- `headline`
- `cta_text`
- `offer_details`
- `fine_print`
- `messaging_theme`

```python
import json
from openai import AsyncOpenAI

llm_client = AsyncOpenAI(api_key="sk-your-openai-key")

AD_SCHEMA = {
    "type": "object",
    "properties": {
        "brand_name": {"type": "string"},
        "headline": {"type": "string"},
        "cta_text": {"type": "string"},
        "offer_details": {"type": "string"},
        "fine_print": {"type": "string"},
        "messaging_theme": {"type": "string"}
    },
    "required": [
        "brand_name",
        "headline",
        "cta_text",
        "offer_details",
        "fine_print",
        "messaging_theme"
    ],
    "additionalProperties": False
}

async def structure_ad_markdown(markdown: str) -> dict:
    response = await llm_client.responses.create(
        model="gpt-5-mini",
        input=[
            {
                "role": "system",
                "content": (
                    "You convert OCR markdown from ad creatives into a strict JSON object. "
                    "Do not invent facts that are not present in the markdown."
                ),
            },
            {
                "role": "user",
                "content": f"Convert this ad creative markdown into JSON:\n\n{markdown}",
            },
        ],
        # Constrain the reply to AD_SCHEMA so it can be parsed directly.
        text={
            "format": {
                "type": "json_schema",
                "name": "ad_creative",
                "schema": AD_SCHEMA,
                "strict": True
            }
        }
    )
    return json.loads(response.output_text)
```

## Why Splitting OCR and Structuring Works Best

Separating the OCR extraction from the data structuring phase makes your automated pipeline significantly more reliable:

- **Accuracy:** ocrskill focuses solely on converting the image into highly accurate markdown.
- **Flexibility:** The secondary LLM focuses on schema mapping and light semantic interpretation.
- **Efficiency:** You can re-run the structuring step with updated schemas without needing to re-process the heavy image files.
- **Quality Assurance:** You maintain a human-readable markdown record for easy QA and debugging.

## Scaling the Pipeline with Asynchronous Processing

To handle large daily batches of competitor ads efficiently, the pipeline processes creatives concurrently. Each creative still runs its two steps in order: first the OCR extraction, then schema normalization.

```python
async def process_creative(image_url: str) -> dict:
    markdown = await image_to_markdown(image_url)
    return await structure_ad_markdown(markdown)

async def process_batch(image_urls: list[str]) -> list[dict]:
    tasks = [process_creative(url) for url in image_urls]
    results = await asyncio.gather(*tasks, return_exceptions=True)
    # Failed creatives are dropped here; log them if you need to retry later.
    return [item for item in results if not isinstance(item, Exception)]
```

## The Competitive Analysis Layer

Once the raw markdown is normalized into a consistent JSON schema, you can aggregate and analyze your competitors' marketing strategies across multiple platforms:

- **Messaging Trends:** What messaging themes and angles are competitors doubling down on?
- **Conversion Tactics:** How are Call-to-Actions (CTAs) evolving month over month?
- **Offer Strategy:** Which promotions and offers correlate with increased ad spend?
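
As a rough illustration of the first question, here is a minimal sketch that counts messaging themes per brand. The sample records are hypothetical and only mimic the shape of the `AD_SCHEMA` output; `theme_counts_by_brand` is an illustrative helper, not part of any library.

```python
from collections import Counter, defaultdict

def theme_counts_by_brand(records: list[dict]) -> dict[str, Counter]:
    """Count how often each messaging theme appears per brand."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for record in records:
        counts[record["brand_name"]][record["messaging_theme"]] += 1
    return dict(counts)

# Hypothetical structured records, shaped like the AD_SCHEMA output.
sample_records = [
    {"brand_name": "Acme", "messaging_theme": "discount", "cta_text": "Shop now"},
    {"brand_name": "Acme", "messaging_theme": "discount", "cta_text": "Save today"},
    {"brand_name": "Acme", "messaging_theme": "social proof", "cta_text": "Shop now"},
    {"brand_name": "Globex", "messaging_theme": "free trial", "cta_text": "Start free"},
]

themes = theme_counts_by_brand(sample_records)
top_theme, top_count = themes["Acme"].most_common(1)[0]
print(top_theme, top_count)  # prints: discount 2
```

The same grouping idea extends to `cta_text` or `offer_details` once you add a capture date to each record.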

## Results and Business Impact

Implementing this two-step architecture gives marketing and data teams a cleaner, more robust foundation for competitive intelligence:

- OCR output remains entirely faithful to the original ad image.
- Structured fields are generated transparently in a separate, isolated step.
- New data extraction schemas can be introduced without altering or breaking the core OCR layer.
- Analysts can easily inspect the intermediate markdown whenever the final JSON output requires verification.

## Key Takeaway for Ad Tracking Automation

The right mental model for this workflow is not "ocrskill magically extracts structured ad data." A more accurate and scalable model is:

**"ocrskill turns the visual creative into markdown, and another AI model structures that markdown for analysis."**

This architectural separation makes your automated competitor ad analysis pipeline easier to debug, simpler to evolve, and aligns perfectly with how modern, real-world automation stacks are engineered.
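
One practical note on the batch step from the scaling section: `asyncio.gather` starts every creative at once, and filtering out exceptions hides which URLs failed. The sketch below is one possible variant, not the pipeline's actual code. It assumes a hypothetical `process_batch_bounded` helper that caps concurrency with a semaphore and returns failures alongside successes; `fake_worker` stands in for `process_creative` so the example runs offline.

```python
import asyncio
from typing import Awaitable, Callable

async def process_batch_bounded(
    image_urls: list[str],
    worker: Callable[[str], Awaitable[dict]],
    max_concurrency: int = 5,
) -> tuple[list[dict], dict[str, Exception]]:
    """Run `worker` over every URL with at most `max_concurrency` in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(url: str) -> dict:
        async with semaphore:
            return await worker(url)

    outcomes = await asyncio.gather(
        *(guarded(url) for url in image_urls), return_exceptions=True
    )
    successes: list[dict] = []
    failures: dict[str, Exception] = {}
    for url, outcome in zip(image_urls, outcomes):
        if isinstance(outcome, Exception):
            failures[url] = outcome  # keep the error instead of dropping it
        else:
            successes.append(outcome)
    return successes, failures

# Stand-in worker (hypothetical) so the sketch runs without network access.
async def fake_worker(url: str) -> dict:
    await asyncio.sleep(0)
    if "bad" in url:
        raise ValueError(f"could not process {url}")
    return {"source": url}

ok, failed = asyncio.run(
    process_batch_bounded(["a.png", "bad.png", "c.png"], fake_worker, 2)
)
print(len(ok), list(failed))  # prints: 2 ['bad.png']
```

Keeping the failed URLs makes it straightforward to retry only those creatives on the next run.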

Claude's [Skills](https://support.claude.com/en/articles/12512180-use-skills-in-claude) feature allows you to package reusable workflows that Claude AI can load on demand. If you frequently need to **extract text from images** - such as YouTube thumbnails, social media carousels, or complex infographics - writing the same OCR (Optical Character Recognition) prompts repeatedly is highly inefficient.

In this comprehensive 2026 guide, we will build a custom **Claude OCR Skill** that leverages the [ocrskill API](https://ocrskill.com) to automatically extract text from visual content and format it into strictly typed JSON. By the end of this tutorial, you'll have a fully functional automated pipeline capable of processing three high-value visual formats: YouTube and social thumbnails, multi-slide social carousels, and data-heavy infographics.

## What Are Custom Claude Skills?

Claude Skills are modular capability packages that extend what Claude AI can do. Each skill resides in a directory with a `SKILL.md` file containing metadata, instructions, and optional scripts or templates. Claude automatically discovers these skills based on their description and loads them only when relevant. This means an installed skill costs only its small metadata footprint in context until it is actually triggered.

The architecture relies on [progressive disclosure](https://docs.claude.com/en/docs/agents-and-tools/agent-skills/overview):

1. **Level 1 - Metadata:** Always loaded. Contains just the skill name and description (~100 tokens).
2. **Level 2 - Instructions:** Loads when the skill is triggered. The body of `SKILL.md` enters the active context window.
3. **Level 3 - Resources:** Loaded on demand. Python scripts, prompt templates, and reference files are read from the filesystem as needed.

This flexible architecture allows you to bundle a comprehensive **Claude OCR workflow** with multiple extraction scripts and reference schemas without bloating your everyday conversational context.
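
To make the split between Level 1 and Level 2 concrete, here is a small sketch that separates a `SKILL.md` string into its frontmatter fields and its markdown body. This is only an illustration of the two parts of the file, not how Claude loads skills internally; `split_skill_file` is a hypothetical helper and its `key: value` parsing is deliberately naive rather than a full YAML parser.

```python
def split_skill_file(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md string into (frontmatter fields, markdown body)."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    try:
        # Index of the closing '---' that ends the frontmatter.
        end = next(
            i for i, line in enumerate(lines[1:], start=1) if line.strip() == "---"
        )
    except StopIteration:
        return {}, text
    metadata: dict[str, str] = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            metadata[key.strip()] = value.strip()
    body = "\n".join(lines[end + 1:]).strip()
    return metadata, body

# Hypothetical skill file used only for this illustration.
sample = """---
name: example-skill
description: Hypothetical skill used only for this illustration.
---
# Example Skill

Step-by-step instructions live here.
"""

metadata, body = split_skill_file(sample)
print(metadata["name"])      # prints: example-skill
print(body.splitlines()[0])  # prints: # Example Skill
```

The `metadata` dictionary corresponds to what is always in context, while `body` corresponds to what is loaded only once the skill is triggered.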

## Why Build a Dedicated Claude OCR Skill?

If your workflow involves asking Claude to extract text from screenshots, YouTube thumbnails, or LinkedIn slide decks, you are likely rewriting the exact same extraction instructions every time.

A dedicated Claude OCR skill solves this inefficiency by permanently encoding:

- The optimal API call format for a high-accuracy OCR service like **ocrskill**.
- Content-specific extraction prompts tailored for thumbnails, carousels, or infographics.
- A secondary AI structuring step that precisely maps raw OCR markdown to a strict JSON schema.
- Built-in error handling and retry logic within a bundled Python script.

Once installed, you simply drop an image into a Claude chat and type "extract this." Your custom skill handles the entire image-to-text and structuring pipeline autonomously.

## Skill Directory Structure

A well-organized directory is crucial for a maintainable skill. We'll use the following structure:

```text
ocrskill-ocr/
├── SKILL.md
└── scripts/
    ├── extract.py
    ├── structure.py
    └── schemas.py
```

The `SKILL.md` file holds metadata and instructions. The `scripts/` directory contains the core logic: text extraction, JSON structuring, and a `schemas.py` module defining Pydantic models for each content type. Using Pydantic ensures runtime validation and provides automatic JSON Schema generation for the OpenAI Structured Outputs API.

## Step 1: Create the SKILL.md Configuration File

Every skill begins with a `SKILL.md` file. The YAML frontmatter dictates when Claude should activate the skill, while the markdown body provides step-by-step execution instructions.

```text
---
name: ocrskill-ocr
description: Extract text from thumbnails, carousels, and infographics using the ocrskill API and structure the output as typed JSON. Use when the user provides an image and asks for OCR, text extraction, or content analysis of visual media.
dependencies: openai, pydantic>=2.0
---

# ocrskill OCR Skill

## Overview

This skill converts visual content into structured data using a two-step pipeline:

1. **Extract** - Send the image to the ocrskill API and receive clean markdown.
2. **Structure** - Pass the markdown to a secondary model that maps it to a rigid JSON schema.

## When to Use

Activate this skill when the user:

- Uploads a thumbnail, carousel slide, or infographic.
- Asks to "extract," "transcribe," or "OCR" an image.
- Requests structured data from a visual asset.

## Supported Content Types

| Type | Description | Pydantic Model |
|------|-------------|----------------|
| thumbnail | YouTube/social video thumbnails with title text, channel names, view counts | `ThumbnailSchema` |
| carousel | Multi-slide social media carousels (Instagram, LinkedIn) | `CarouselSchema` |
| infographic | Data-heavy visuals with stats, charts, and callouts | `InfographicSchema` |

## Instructions

1. Determine the content type from the user's uploaded image. If unclear, ask for clarification.
2. Run `scripts/extract.py` with the image file to obtain raw markdown text.
3. Run `scripts/structure.py` using the markdown and the appropriate matching schema.
4. Return both the raw markdown and the structured JSON payload to the user.

## Environment

Requires the `openai` and `pydantic` Python packages, alongside valid `OCRSKILL_API_KEY` and `OPENAI_API_KEY` environment variables.
```

*SEO Tip: The `description` field is the most critical line. Claude reads it at startup to determine skill relevance. Be highly specific - include keywords like "OCR," "extract text," "thumbnails," and "infographics" to ensure reliable matching.*

## Step 2: Define Pydantic Schemas for Structured Output

Rather than managing raw JSON schema files, we define Pydantic models in a single `schemas.py` module. This approach provides robust runtime validation, automatic JSON Schema generation, and a superior developer experience with type checking.

**scripts/schemas.py**

```python
from pydantic import BaseModel


class ThumbnailSchema(BaseModel):
    title: str
    channel_name: str
    view_count: int | None = None
    duration: str | None = None
    badge_text: str | None = None
    overlay_text: list[str] = []


class CarouselSchema(BaseModel):
    slide_number: int
    heading: str | None = None
    body_text: str
    cta_text: str | None = None
    hashtags: list[str] = []
    mentions: list[str] = []


class InfographicStat(BaseModel):
    label: str
    value: str


class InfographicSection(BaseModel):
    heading: str
    stats: list[InfographicStat] = []
    body_text: str | None = None


class InfographicSchema(BaseModel):
    title: str
    subtitle: str | None = None
    sections: list[InfographicSection]
    source_attribution: str | None = None


# Maps the content type names used by the scripts to their models.
SCHEMAS = {
    "thumbnail": ThumbnailSchema,
    "carousel": CarouselSchema,
    "infographic": InfographicSchema,
}
```

Notice how the `InfographicSchema` is intentionally nested. Infographics often feature multiple sections containing distinct headings and statistics. A flat schema would lose this crucial visual hierarchy. Pydantic naturally models this nested structure, keeping the generated JSON schema perfectly in sync.

## Step 3: Build the OCR Image Text Extraction Script

Our extraction script will call the ocrskill API utilizing the familiar OpenAI SDK format. It accepts an image path or URL and prints cleanly formatted markdown to stdout.

**scripts/extract.py**

```python
import sys
import base64
import os
from openai import OpenAI

client = OpenAI(
    base_url="https://api.ocrskill.com/v1",
    api_key=os.environ["OCRSKILL_API_KEY"],
)

PROMPTS = {
    "thumbnail": (
        "Convert this video thumbnail to markdown. "
        "Preserve the title text, channel name, view count, duration, "
        "and any overlay text or badges exactly as they appear."
    ),
    "carousel": (
        "Convert this carousel slide to markdown. "
        "Preserve the heading, body text, calls to action, hashtags, "
        "and any mentions exactly as they appear."
    ),
    "infographic": (
        "Convert this infographic to markdown. "
        "Preserve the title, all section headings, every statistic with its label, "
        "body text, and source attribution exactly as they appear. "
        "Use markdown headings to reflect the visual hierarchy."
    ),
}


def extract(image_source: str, content_type: str) -> str:
    # Unknown content types fall back to the infographic prompt.
    prompt = PROMPTS.get(content_type, PROMPTS["infographic"])

    if image_source.startswith("http"):
        image_content = {"type": "image_url", "image_url": {"url": image_source}}
    else:
        # Local files are sent inline as a base64 data URL.
        with open(image_source, "rb") as f:
            b64 = base64.b64encode(f.read()).decode()
        ext = image_source.rsplit(".", 1)[-1].lower()
        mime = {
            "png": "image/png",
            "jpg": "image/jpeg",
            "jpeg": "image/jpeg",
            "webp": "image/webp",
            "gif": "image/gif",
        }.get(ext, "image/png")
        image_content = {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{b64}"}}

    response = client.chat.completions.create(
        model="ocrskill-v1",
        messages=[{
            "role": "user",
            "content": [
                {"type": "text", "text": prompt},
                image_content,
            ],
        }],
    )
    return response.choices[0].message.content


if __name__ == "__main__":
    image_path = sys.argv[1]
    ctype = sys.argv[2] if len(sys.argv) > 2 else "infographic"
    print(extract(image_path, ctype))
```

**Why Content-Specific Prompts Matter:** A YouTube thumbnail prompt asking for "section headings and statistics" will confuse the OCR output. Tailoring prompts to the specific visual structure of each content type significantly improves text extraction accuracy.

## Step 4: Build the JSON Structuring Script

The structuring script takes the raw markdown from step one and maps it to our Pydantic model using a secondary AI model. This separation of concerns is the exact two-step pattern utilized in our [automated competitor ad analysis pipeline](https://ocrskill.com/blog/automated-competitor-ad-analysis-pipeline) - ocrskill handles the high-fidelity **image-to-text conversion**, while a fast general-purpose LLM handles semantic structuring.

By passing our Pydantic model's JSON schema as the `response_format` of the OpenAI Structured Outputs API, we constrain the model to return JSON that matches the schema, and the final `model_validate_json` call checks the result against the same model. This removes the need for fragile `json.loads` try/except blocks. One caveat: strict mode places its own requirements on the schema (for example, every object must set `additionalProperties` to `false` and list all of its properties as required), so the schema Pydantic generates by default may need adjusting before the API accepts it.

**scripts/structure.py**

```python
import sys
import json
import os
from openai import OpenAI
from schemas import SCHEMAS

llm_client = OpenAI(api_key=os.environ.get("OPENAI_API_KEY", ""))


def structure(markdown: str, content_type: str) -> dict:
    schema_cls = SCHEMAS[content_type]
    json_schema = schema_cls.model_json_schema()

    response = llm_client.chat.completions.create(
        model="gpt-5-mini",
        messages=[
            {
                "role": "system",
                "content": (
                    "You convert OCR markdown into a JSON object matching the provided schema. "
                    "Use null for fields not present in the markdown. Do not invent data."
                ),
            },
            {
                "role": "user",
                "content": f"OCR Markdown:\n{markdown}",
            },
        ],
        response_format={
            "type": "json_schema",
            "json_schema": {
                "name": content_type,
                "schema": json_schema,
                "strict": True,
            }
        },
    )

    # Validate the raw string response using Pydantic
    return schema_cls.model_validate_json(response.choices[0].message.content).model_dump()


if __name__ == "__main__":
    # The markdown arrives on stdin; the content type is the first argument.
    markdown_input = sys.stdin.read()
    ctype = sys.argv[1] if len(sys.argv) > 1 else "infographic"
    result = structure(markdown_input, ctype)
    print(json.dumps(result, indent=2))
```

*(Note: The code above uses the standard `chat.completions.create` format with `response_format` for structured outputs).*

When Claude runs this skill, it pipes the stdout of `extract.py` into the stdin of `structure.py`. This keeps each component of your pipeline isolated and independently testable.

## Step 5: Package and Install Your Claude OCR Skill

To deploy, package the skill directory as a ZIP file and upload it directly to Claude.

```bash
cd ocrskill-ocr/
zip -r ../ocrskill-ocr.zip .
```

Next, navigate to [Customize > Skills](https://claude.ai/customize/skills) within the Claude interface, click the "+" button, select "Upload a skill," and upload your newly created ZIP file. The skill will appear in your skill list, ready to be toggled on.

*For Claude Code users:* You can simply drop the `ocrskill-ocr/` directory into `~/.claude/skills/` (for personal use) or `.claude/skills/` (for project-level access), and Claude will discover it automatically.

## How to Use the Claude OCR Skill

Once successfully installed, the skill activates automatically whenever you provide an image and mention text extraction. Try these natural language prompts:

- *"Extract the text from this YouTube thumbnail."*
- *"OCR this LinkedIn carousel slide and give me structured JSON."*
- *"Pull all the statistics and data from this infographic."*

Claude will read the `SKILL.md`, determine the correct content type, execute the extraction script against the ocrskill API, and then run the structuring script. You receive both the raw markdown transcript and the typed JSON payload.

### Batch Processing Multiple Images

For batch processing, you can ask Claude to iterate over an entire directory of images:

> "I have 30 social media carousel slides in `/screenshots/campaign-q1/`. Extract and structure each one as a `carousel` type. Save the final results to a single JSON array."

Claude will loop through the folder, invoke the skill's scripts for each image, and aggregate the extracted data.

## Handling Multi-Slide Social Media Carousels

Social media carousels require special handling because a single cohesive post spans multiple separate images. Our skill processes each slide independently but returns an array of structured objects, preserving the critical slide order.

```python
from extract import extract
from structure import structure


def process_carousel(slide_paths: list[str]) -> list[dict]:
    results = []
    for i, path in enumerate(slide_paths, start=1):
        markdown = extract(path, "carousel")
        data = structure(markdown, "carousel")
        # Trust the input order over whatever number the model inferred.
        data["slide_number"] = i
        results.append(data)
    return results
```

This version processes slides one after another, because `extract` and `structure` use the synchronous OpenAI SDK and block while waiting on the API. If you require faster processing, you can wrap the calls in `asyncio.to_thread` to execute them concurrently.

## Pro Tips for Optimizing Your Claude OCR Skill

- **Prompt Precision is Key:** The prompt engineering inside `extract.py` dictates your final quality. If the OCR frequently misses overlay text on thumbnails, refine the prompt: *"Include all text rendered on top of the image, paying special attention to small text in corners and subtle watermarks."*
- **Schema Evolution is Cheap:** Because we decoupled OCR extraction from JSON structuring, you can add new fields to your Pydantic model without ever needing to re-process the original images.
- **Test Independently:** Always run `extract.py` on a sample image and verify the raw markdown before attempting to debug the JSON structuring. Flawed extraction cannot be fixed by clever JSON schemas.
- **Offload Heavy Lifting to ocrskill:** The ocrskill API runs on highly optimized NVIDIA GPU infrastructure, returning precise text in under 500ms. Relying solely on standard multimodal LLM vision for text-dense infographics is significantly slower and highly prone to hallucination.

## Conclusion: Automating Image Text Extraction with Claude

By building and installing this custom Claude Skill, you've created a permanent, highly reusable OCR pipeline that lives directly inside your AI assistant:

- **Thumbnails:** Instantly parsed into titles, channel names, view counts, and overlays.
- **Carousel Slides:** Accurately extracted into per-slide headings, body copy, and hashtags.
- **Infographics:** Structured into hierarchical sections with accurately labeled data points.

The raw markdown provides a verifiable audit trail, while the structured JSON is immediately ready to be ingested into dashboards, databases, or downstream automation workflows. Build it once, and never type out a manual OCR prompt again.

The complete Claude skill package is available at https://gitlab.com/ocrskill/claude-skill. Feel free to check it out:

```bash
git clone https://gitlab.com/ocrskill/claude-skill.git ocrskill-ocr
cd ocrskill-ocr/
ls -l
```
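
As a follow-up to the `asyncio.to_thread` suggestion in the carousel section, here is a minimal sketch of concurrent slide processing. It is an illustration under stated assumptions rather than the packaged skill's code: `process_carousel_concurrently` is a hypothetical helper that takes the extraction and structuring functions as parameters, and `fake_extract` / `fake_structure` are stand-ins so the example runs without API keys.

```python
import asyncio
from typing import Callable

async def process_carousel_concurrently(
    slide_paths: list[str],
    extract_fn: Callable[[str, str], str],
    structure_fn: Callable[[str, str], dict],
) -> list[dict]:
    """Process slides in worker threads; results keep the input slide order."""

    def process_one(path: str) -> dict:
        markdown = extract_fn(path, "carousel")
        return structure_fn(markdown, "carousel")

    # asyncio.gather returns results in the order the awaitables were passed.
    results = await asyncio.gather(
        *(asyncio.to_thread(process_one, path) for path in slide_paths)
    )
    for number, data in enumerate(results, start=1):
        data["slide_number"] = number
    return list(results)

# Stand-in functions (hypothetical) so the sketch runs offline; in the skill
# you would pass the real `extract` and `structure` functions instead.
def fake_extract(path: str, content_type: str) -> str:
    return f"# Slide from {path}"

def fake_structure(markdown: str, content_type: str) -> dict:
    return {"body_text": markdown}

slides = asyncio.run(
    process_carousel_concurrently(["s1.png", "s2.png"], fake_extract, fake_structure)
)
print([s["slide_number"] for s in slides])  # prints: [1, 2]
```

Each slide runs in its own thread, so the blocking SDK calls overlap, while the slide numbers still follow the order of `slide_paths`.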

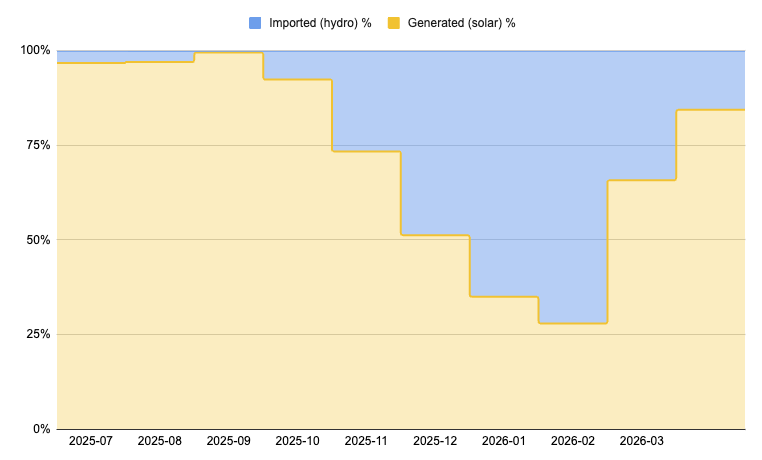

The AI revolution demands massive computational power. Every OCR API request, every image-to-markdown conversion, and every millisecond of AI vision inference draws significant electricity. At OCRskill, we believe that cutting-edge artificial intelligence shouldn't cost the Earth. That's why we've built our infrastructure on a foundation of 100% renewable energy - proving that sustainable AI inference isn't just a concept; it's the future of green computing and responsible AI.

## The Carbon Cost of AI Vision and OCR APIs

Large AI vision models are incredibly power-hungry. A single OCR inference pipeline running on state-of-the-art NVIDIA hardware can consume massive amounts of electricity, especially when extracting text from thousands of images per hour for AI agents, content creators, and automated data entry workflows.

When you multiply that energy usage across the millions of requests handled by modern OCR APIs, the environmental footprint becomes impossible to ignore. While many AI providers attempt to offset their carbon emissions by purchasing renewable energy certificates (RECs) from distant markets, OCRskill chose a more direct approach: local renewable energy generation and verified green grid imports.

## Our Renewable Energy Mix: March 2026 Snapshot

As of March 2026, OCRskill's AI inference infrastructure operates on an industry-leading sustainable energy blend:

- **84.41% generated locally from solar power** - Captured directly at our edge computing locations.
- **15.59% imported from certified green-hydro** - Sourced exclusively from verified renewable hydroelectric facilities.

This commitment to green computing isn't just a marketing claim. It's a measurable reality, tracked month by month, watt by watt, to ensure our OCR software remains truly carbon-neutral.

## Seasonal Reality: The Solar Story Behind Our OCR API

Solar power follows nature's rhythm. Our ten-month energy data tells a compelling story of sustainable adaptation for AI infrastructure:

| Month | Generated (Solar) | Imported (Hydro) |
|-------|-------------------|------------------|
| 2025-06 | 96.75% | 3.25% |
| 2025-07 | 97.02% | 2.98% |
| 2025-08 | 99.57% | 0.43% |
| 2025-09 | 92.42% | 7.58% |
| 2025-10 | 73.42% | 26.58% |
| 2025-11 | 51.24% | 48.76% |
| 2025-12 | 34.97% | 65.03% |
| 2026-01 | 27.89% | 72.11% |
| 2026-02 | 65.77% | 34.23% |
| 2026-03 | 84.41% | 15.59% |

The summer months (June–August 2025) saw our solar generation peak at nearly 99.6%, with hydro imports dropping below 0.5%. As autumn arrived and days shortened, solar generation naturally declined, reaching its winter nadir in January 2026 at 27.89%.

But here is the most important takeaway for sustainable AI: even in the darkest winter months, every single watt we imported to power our OCR API came from certified green-hydro sources.

## Why Hydroelectric Power is Solar's Ideal Partner

Hydroelectric power is solar energy's perfect complement for green data centers. While solar fluctuates with daylight and seasons, hydroelectricity provides consistent baseload capacity from flowing water.

By pairing local solar generation with certified green-hydro imports, we've achieved something rare in the AI infrastructure space: **genuine 100% renewable operation year-round**, without relying on the carbon shell game of conventional grid offsets.

Our hydro imports aren't generic grid power with RECs attached. They are verified renewable generation from facilities that meet strict environmental standards - meaning no fossil fuel blending and no creative carbon accounting.

## Sustainable AI Inference: Faster AND Greener OCR

There's a common misconception that eco-friendly computing requires a compromise in performance - that going green means slower AI inference, higher latency, or reduced capability. OCRskill proves otherwise.

Our high-performance vision API delivers state-of-the-art OCR in milliseconds. Whether you need to convert thumbnails, extract text from infographics, process social media content, or transform complex documents into structured markdown, we provide industry-leading speed and accuracy. Best of all, we do it on hardware powered by the sun and flowing rivers, not by coal or natural gas.

This matters immensely for AI agents and automated workflows. When your AI agent processes thousands of images through our OCR API, it's not just getting fast, accurate text extraction. It's making a definitively sustainable choice for the planet.

## The ESG Imperative for AI Infrastructure

Environmental, Social, and Governance (ESG) criteria are no longer optional for technology companies. Enterprise customers increasingly demand supply chain transparency, and developers want to ensure their tools aren't accelerating climate change.

By publishing our actual renewable energy generation data - rather than vague commitments or purchased offsets - we hold ourselves accountable. The data table above isn't cherry-picked; it shows real seasonal variation and acknowledges that winter months require more grid imports. This level of transparency is the foundation of genuine corporate sustainability claims.

## Building the Future of Green AI and OCR

Our renewable energy strategy is just one pillar of OCRskill's commitment to sustainable AI:

- **Edge-local inference** - Processing images closer to their source reduces data transmission energy.
- **Hardware efficiency** - We utilize state-of-the-art NVIDIA GPUs that deliver maximum FLOPS per watt.
- **Responsible sourcing** - 100% of our grid imports come from verified renewable hydroelectric facilities.
- **Complete transparency** - We provide public energy reporting, free from greenwashing or marketing spin.

Every millisecond of OCR you run through our API contributes to a growing proof point: AI image processing at scale can be both incredibly powerful and environmentally responsible.

## Conclusion: Make the Green Choice for Your AI Agents

When you integrate OCRskill into your AI agent workflow, you're not just choosing a fast, accurate OCR API for image-to-markdown conversion. You are choosing sustainable AI inference. You are voting with your compute for a future where advanced AI vision doesn't come at the planet's expense.

The next time your AI workflow extracts text from a thumbnail, parses an infographic, or converts a scanned document to markdown, remember: up to 99% of the power that made it possible came from the sun, and the rest came from flowing water. None of it came from fossil fuels.

That is the OCRskill difference. State-of-the-art AI vision, with zero carbon guilt.

---

*Want to make your AI workflows greener while maintaining top-tier performance? [Get your free OCR API key](/) and start extracting text sustainably today.*

## Frequently Asked Questions (FAQ)

**Does using solar power affect OCR API performance, latency, or uptime?**

Not at all. Our sustainable AI infrastructure is designed for enterprise-grade reliability. Solar panels feed into high-capacity battery storage and grid-tie systems that ensure consistent power delivery. The renewable source behind our electricity does not impact the speed, accuracy, or availability of our state-of-the-art OCR API.

**How do you verify that imported hydro power is truly "green computing"?**

We source our electricity exclusively from certified renewable hydroelectric facilities that meet strict environmental standards. These are not generic grid purchases combined with renewable energy credits (RECs); we contract directly with verified green generators to guarantee zero fossil fuel blending.

**What happens to your OCR API during extended cloudy periods or at night?**

Advanced battery storage handles short-term gaps. When solar generation drops for extended periods (as seen in our winter data), our infrastructure seamlessly draws from our certified green-hydro contracts. The result is uninterrupted, 24/7, 100% renewable operation for all your image-to-text needs.

**Can enterprise customers request ESG sustainability reporting for their API usage?**

Absolutely. Contact us for detailed environmental impact reports showing the exact renewable energy mix powering your specific OCR workloads. We are committed to transparency to help your organization meet its own ESG (Environmental, Social, and Governance) reporting requirements.

**Is sustainable AI inference and green OCR more expensive?**

Surprisingly, no. Generating our own local solar power, combined with highly efficient edge-located NVIDIA hardware, actually reduces our long-term operational costs. We pass those savings directly to our customers while delivering carbon-neutral OCR. Sustainability and affordability are built directly into our architecture.

The creator economy generates billions of visual content pieces daily. Brands, agencies, and platforms need to understand what is working, but much of the useful data is trapped inside screenshots, thumbnails, and overlay text.

In this guide, we will build a simple two-step pipeline for short-form video analysis:

1. Use `ocrskill` to convert screenshots into Markdown.
2. Use a second AI model to turn that Markdown into structured JSON.

That distinction matters. `ocrskill` is the OCR layer. It extracts the visible content accurately and quickly, but the schema design and data normalization happen in a separate model.

## The Data Problem

Short-form video platforms display critical metrics visually:

- View counts, likes, comments, shares
- Creator handles and verification badges
- Hashtags and captions burned into thumbnails
- Branded content tags and sponsorship disclosures
- Music/audio attribution text

Some of this data is unavailable or unreliable via platform APIs. But it is visible in screenshots and thumbnails, which makes it a good fit for an OCR-first workflow.

## Capture Strategy

We use a combination of:

- **Platform APIs** (where available) for basic metadata
- **Screenshot automation** for visual metrics not exposed via API
- **Thumbnail extraction** for video preview analysis

```python
from playwright import async_api as playwright

async def capture_reel_screenshot(url: str, output_path: str):
    async with playwright.async_playwright() as p:
        browser = await p.chromium.launch()
        # Phone-sized viewport so the page renders its mobile layout.
        page = await browser.new_page(viewport={"width": 390, "height": 844})
        await page.goto(url, wait_until="networkidle")
        await page.screenshot(path=output_path, full_page=False)
        await browser.close()
```

## Step 1: Extract the Screenshot to Markdown

Once screenshots are captured, send them to `ocrskill` and ask for a faithful Markdown transcription of everything visible in the image.
```python
import base64

from openai import OpenAI

ocr_client = OpenAI(
    base_url="https://api.ocrskill.com/v1",
    api_key="sk-your-key"
)


def extract_screenshot_markdown(image_path: str) -> str:
    # Encode the local screenshot as a base64 data URI
    with open(image_path, "rb") as f:
        b64 = base64.b64encode(f.read()).decode()

    response = ocr_client.chat.completions.create(
        model="ocrskill-v1",
        messages=[{
            "role": "user",
            "content": [
                {"type": "text", "text": """Convert this social media screenshot into clean Markdown.

Preserve:
- visible text
- counts and labels
- usernames and captions
- hashtags and mentions
- sponsorship disclosures
- music attribution

Do not guess missing values. If something is unclear, mark it as uncertain in the Markdown."""},
                {"type": "image_url", "image_url": {"url": f"data:image/png;base64,{b64}"}}
            ]
        }]
    )
    return response.choices[0].message.content
```

The key idea is to keep the OCR step honest. You first extract what is actually visible, in a readable format, before asking another model to interpret or normalize it.

## Step 2: Use Another AI Model to Structure the Data

After OCR, pass the Markdown into a general-purpose LLM that is good at schema following. Here we use `gpt-5-mini`, but the same pattern also works with other structured-output-capable models.

```python
from openai import OpenAI
import json

llm_client = OpenAI(api_key="sk-your-openai-key")


def structure_reel_metrics(markdown: str) -> dict:
    response = llm_client.chat.completions.create(
        model="gpt-5-mini",
        temperature=0,
        response_format={"type": "json_object"},
        messages=[{
            "role": "user",
            "content": f"""You are given OCR output from a social media screenshot.

OCR Markdown:
{markdown}

Extract the following fields into JSON:
- creator_handle: string | null
- verified: boolean | null
- view_count: integer | null
- like_count: integer | null
- comment_count: integer | null
- share_count: integer | null
- caption_text: string | null
- hashtags: string[]
- is_sponsored: boolean | null
- music_attribution: string | null

Rules:
- Use null when the OCR does not clearly support a value.
- Normalize numeric abbreviations like 2.4M or 18.2K into integers.
- Do not invent fields that are not present.
- Return only valid JSON."""
        }]
    )
    return json.loads(response.choices[0].message.content)
```

This separation gives you a more reliable system:

- `ocrskill` focuses on extracting the visible content from the image.
- The second model focuses on normalization, typing, and schema enforcement.
- You can swap the second model later without changing your OCR layer.

## Building the Dataset

Process thousands of screenshots into a structured DataFrame:

```python
import pandas as pd
from pathlib import Path

screenshots = list(Path("./captures").glob("*.png"))
records = []

for screenshot in screenshots:
    markdown = extract_screenshot_markdown(str(screenshot))
    structured = structure_reel_metrics(markdown)
    # Keep provenance so every row can be traced back to its source
    structured["source_file"] = screenshot.name
    structured["ocr_markdown"] = markdown
    records.append(structured)

df = pd.DataFrame(records)
df.to_parquet("creator_metrics.parquet")
```

Keeping both the raw OCR Markdown and the structured JSON is useful for auditing. If a downstream metric looks wrong, you can inspect the OCR output that the structuring model received.

## Analysis Examples

With structured data, you can now answer questions like:

- Which creators have the highest engagement rate in the fitness niche?
- What posting times correlate with maximum views?
- How does sponsored content performance compare to organic?
- What hashtag combinations drive the most shares?
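
As a rough sketch of the sponsored-versus-organic question, the snippet below works on the same list of record dicts that the dataset loop builds, before it becomes a DataFrame. The helper names `engagement_rate` and `mean_engagement_by_sponsorship` are illustrative, and the engagement formula (likes + comments + shares, divided by views) is an assumption; substitute whatever definition you actually report on.

```python
from collections import defaultdict
from statistics import mean
from typing import Optional


def engagement_rate(record: dict) -> Optional[float]:
    """Interactions per view, or None when the view count is missing or zero.

    Assumed definition: (likes + comments + shares) / views.
    """
    views = record.get("view_count")
    if not views:
        return None
    # Treat a null interaction count as 0 rather than dropping the record
    interactions = sum(
        record.get(key) or 0
        for key in ("like_count", "comment_count", "share_count")
    )
    return interactions / views


def mean_engagement_by_sponsorship(records: list) -> dict:
    """Average engagement rate, keyed by the is_sponsored flag (True/False)."""
    buckets = defaultdict(list)
    for record in records:
        rate = engagement_rate(record)
        sponsored = record.get("is_sponsored")
        # Skip rows where the OCR did not support a rate or a sponsorship flag
        if rate is None or sponsored is None:
            continue
        buckets[sponsored].append(rate)
    return {flag: mean(rates) for flag, rates in buckets.items()}
```

Records with a missing view count or an unknown sponsorship flag are skipped rather than guessed, in keeping with the null-handling rule in the structuring prompt.
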
## Why This Architecture Works Better

Trying to force OCR and schema reasoning into a single step can make debugging harder. A two-stage pipeline is easier to trust and improve:

- When OCR is wrong, you can fix the extraction prompt or improve screenshot quality.
- When the JSON is wrong, you can refine the second model's schema prompt.
- You can test each stage independently.
- You can re-run the structuring step later without reprocessing the image.

For production systems, this usually leads to better observability and cleaner failure handling.

## Practical Extensions

Once the pipeline is working, you can extend it with:

- **Trend classification:** classify the reel by niche, format, or call to action.
- **Brand mention detection:** flag competitor names or campaign disclosures.
- **Confidence review queues:** send uncertain OCR outputs to human review.
- **Embedding and search:** index the OCR Markdown for retrieval and analytics.

## Conclusion

The creator economy is a visual-first ecosystem, and many of the most useful signals never arrive in a clean API response. A practical approach is to let `ocrskill` do what it does best, convert images into high-quality Markdown, and then let a second AI model transform that OCR output into structured data.

That gives you a pipeline that is easier to audit, easier to improve, and much closer to how production OCR systems are actually built.

The OpenAI-compatible OCR API is powerful, but sometimes you don't want to build a complex chat completion payload just to extract text from a single image.

Our new **simplified OCR API endpoint** removes that boilerplate setup. You can now upload an image directly using `curl --data-binary` and instantly convert the image to markdown text. If your application requires a structured OCR response, this same endpoint seamlessly accepts a Mistral-style JSON payload featuring a `document_url` data URI.

## How the Simplified OCR API Works

The new dedicated text extraction endpoint is:

- `POST https://api.ocrskill.com/ocr`

This endpoint automatically handles the tedious parts of image-to-text processing for you:

- Base64 encoding for raw image uploads
- Constructing OpenAI-style multimodal payloads
- Forwarding the request to the underlying OCR model
- Returning clean markdown directly for the simplest use cases

This means you no longer need to manually assemble:

- A `messages` array
- An `image_url` content block
- A formatted `data:image/...;base64,...` string

...unless you specifically need the standard OpenAI-compatible route for a broader AI integration.

## The Fastest Way to Convert Image to Markdown via cURL

This is now the fastest, most straightforward way to OCR a local image file:

```bash
export API_KEY="sk-your-key-here"

curl https://api.ocrskill.com/ocr \
  -H "Authorization: Bearer $API_KEY" \
  -H "Content-Type: image/png" \
  --data-binary "@example.png"
```

The response body returns plain, structured markdown text:

```md
# Receipt

Store Name
123 Main St

Total: $18.42
```

If you want to save the extracted OCR output directly to a file:

```bash
curl https://api.ocrskill.com/ocr \
  -H "Authorization: Bearer $API_KEY" \
  -H "Content-Type: image/png" \
  --data-binary "@example.png" > output.md
```

> **Pro Tip**: Always use `--data-binary`, not `-d`. The `-d` flag is designed for form-style request bodies and can corrupt binary image uploads during text extraction.
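
The same raw-body upload can be sketched in Python with only the standard library. This mirrors the `curl --data-binary` call: the image bytes are sent unmodified as the request body, with the image MIME type as `Content-Type`. The helper names `build_ocr_request` and `ocr_image` are hypothetical, and decoding the response as UTF-8 is an assumption about the endpoint's plain-markdown output.

```python
import mimetypes
import urllib.request
from pathlib import Path

OCR_URL = "https://api.ocrskill.com/ocr"


def build_ocr_request(image_bytes: bytes, filename: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a raw-binary POST, like `curl --data-binary`."""
    content_type, _ = mimetypes.guess_type(filename)
    if content_type is None or not content_type.startswith("image/"):
        raise ValueError(f"Not a recognizable image file: {filename}")
    return urllib.request.Request(
        OCR_URL,
        data=image_bytes,  # bytes are sent as-is, no form encoding
        method="POST",
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": content_type,
        },
    )


def ocr_image(path: str, api_key: str) -> str:
    """Upload a local image and return the markdown body (assumed UTF-8)."""
    request = build_ocr_request(Path(path).read_bytes(), path, api_key)
    with urllib.request.urlopen(request, timeout=60) as response:
        return response.read().decode("utf-8")
```

Splitting request construction from sending keeps the header logic easy to unit-test without touching the network.
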

Alternatively, you can submit the image as a multipart form upload (`multipart/form-data`):

```bash
curl https://api.ocrskill.com/ocr \
  -H "Authorization: Bearer $API_KEY" \
  -F "image=@receipt.jpg"
```

## Mistral-Compatible JSON OCR Response

If your application is already configured to send the **Mistral OCR request format**, you can maintain that input structure and let ocrskill handle the heavy lifting behind the scenes.

```bash
curl https://api.ocrskill.com/ocr \
  -H "Authorization: Bearer $API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "type": "document_url",
    "document_url": "data:image/jpeg;base64,/9j/4AAQSkZJRgABAQ..."
  }'
```

In this structured mode, the response is JSON, matching the format of a standard Mistral OCR result:

```json
{
  "pages": [
    {
      "index": 0,
      "markdown": "# Receipt\n\nStore Name\n123 Main St\n\nTotal: $18.42",
      "images": [],
      "dimensions": {
        "dpi": 200,
        "height": 0,
        "width": 0
      }
    }
  ],
  "model": "LightOnOCR-2-1B",
  "document_annotation": null,
  "usage_info": {
    "pages_processed": 1,
    "doc_size_bytes": 123456
  }
}
```

## Choosing the Right OCR Endpoint for Your App

**Use the simplified OCR endpoint (`/ocr`) when:**

- You want the shortest possible `curl` command.
- You are OCRing a local screenshot, scan, receipt, or photo.
- You just want raw markdown text returned immediately.

**Use the OpenAI-compatible endpoint (`/chat/completions`) when:**

- You are integrating directly with the official OpenAI SDK.
- You want to maintain a standard chat completions workflow.
- You are already constructing complex multimodal messages in your application.

**Use the Mistral-style JSON mode on `/ocr` when:**

- Your client already emits standard `document_url` payloads.
- You require a structured JSON OCR response instead of plain markdown text.
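
For the Mistral-style JSON mode, the request body and the response handling can be sketched in Python as follows. The field names (`type`, `document_url`, `pages`, `index`, `markdown`) come from the examples above; the helper names `build_document_url_payload` and `markdown_from_ocr_response` are hypothetical, and joining pages with a blank line is an illustrative choice rather than documented behavior.

```python
import base64
import json


def build_document_url_payload(image_bytes: bytes, mime_type: str = "image/jpeg") -> str:
    """Return the JSON request body with the image embedded as a data URI."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return json.dumps({
        "type": "document_url",
        "document_url": f"data:{mime_type};base64,{encoded}",
    })


def markdown_from_ocr_response(body: str) -> str:
    """Concatenate the per-page markdown from a structured OCR response."""
    pages = json.loads(body)["pages"]
    # Order by page index so multi-page results read top to bottom
    ordered = sorted(pages, key=lambda page: page["index"])
    return "\n\n".join(page["markdown"] for page in ordered)
```

Send the payload with `Content-Type: application/json`, exactly as in the `curl -d` example.
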
## Get Your Free OCR API Key

You can generate a free, limited API key instantly to test the service (returned as JSON):

```bash
curl https://api.ocrskill.com/get-key.json
```

For a plain-text key, use:

```bash
curl https://api.ocrskill.com/get-key
```

## Conclusion

Our simplified OCR API is purpose-built for the most common text extraction workflow: **upload one image, get markdown back**. It eliminates multimodal request boilerplate while keeping the OpenAI-compatible API available for more advanced, multi-step AI integrations.

If you want the fastest possible start, begin with `POST /ocr` and `--data-binary`. When your application scales into a larger multimodal workflow, the robust OpenAI-compatible `chat/completions` route is always there for you.

Social media is overwhelmingly visual. Carousels, reels, stories, and infographics carry the most valuable signals - but they're locked inside pixels. Traditional text-based monitoring tools miss all of it.

In this tutorial, we'll build an autonomous agent that watches social media feeds and extracts text and structured data from every visual asset using ocrskill. The entire pipeline is just a clean function and a loop - no abstractions, no bloat, completely without LangChain.

## Prerequisites

- Python 3.10+
- An ocrskill API key ([get one free](https://ocrskill.com/#pricing))
- The OpenAI SDK (`pip install openai`)
- LangChain does not need to be installed, at all, really!

That's it. No agent frameworks required.

## Step 1: Set Up the ocrskill Client

Since ocrskill uses the OpenAI REST API format, setup is trivial:

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.ocrskill.com/v1",
    api_key="sk-your-ocrskill-key"
)
```

## Step 2: Create the Vision Function

We define a simple function that accepts an image URL and returns extracted text. No decorators, no framework boilerplate:

```python
def extract_text_from_image(image_url: str) -> str:
    """Extract all text and structured data from a social media image."""
    response = client.chat.completions.create(
        model="ocrskill-v1",
        messages=[{
            "role": "user",
            "content": [
                {"type": "text", "text": "Extract all text, hashtags, mentions, and key visual elements."},
                {"type": "image_url", "image_url": {"url": image_url}}
            ]
        }]
    )
    return response.choices[0].message.content
```

Clean, readable, and works without LangChain.

## Step 3: Build the Analysis Layer

For the LLM analysis, we use the OpenAI SDK directly.
No need to wrap it in an "agent" abstraction:

```python
from openai import OpenAI

openai_client = OpenAI(api_key="sk-your-openai-key")


def analyze_content(extracted_text: str, image_url: str) -> str:
    """Analyze extracted content and summarize key messages."""
    response = openai_client.chat.completions.create(
        model="gpt-5-mini",
        temperature=0,
        messages=[{
            "role": "user",
            "content": f"""Analyze this social media content and summarize the key message:

Image URL: {image_url}

Extracted content:
{extracted_text}

Provide a concise summary of the main message, tone, and any calls to action."""
        }]
    )
    return response.choices[0].message.content
```

Direct API calls. Fully transparent. No hidden prompt templates, no mystery "agent" logic - just your code, working without LangChain.

## Step 4: Process a Feed

Here's where the magic happens. Notice what we're doing: iterating over images and calling functions. That's it. No framework required:

```python
feed_images = [
    "https://example.com/carousel-slide-1.jpg",
    "https://example.com/story-screenshot.png",
    "https://example.com/infographic.jpg",
]

for img in feed_images:
    # Extract text using ocrskill
    extracted = extract_text_from_image(img)

    # Analyze with gpt-5-mini
    analysis = analyze_content(extracted, img)

    print(f"\n=== {img} ===")
    print(analysis)
```

A straightforward for loop. No `initialize_agent`, no `AgentType.OPENAI_FUNCTIONS`, no verbose logging noise. Just Python, doing what Python does best - without LangChain.

## What About "Agents"?
If you need multi-step reasoning, you can add it explicitly:

```python
def send_alert(message: str) -> None:
    """Placeholder alert hook (hypothetical): swap in Slack, email, or a webhook."""
    print(f"[ALERT] {message}")


def monitor_feed(images: list[str]) -> list[dict]:
    """Monitor a feed and return structured analysis."""
    results = []
    for img in images:
        extracted = extract_text_from_image(img)
        analysis = analyze_content(extracted, img)

        # Add conditional logic - your logic, visible and explicit
        if "competitor" in analysis.lower():
            send_alert(f"Competitor mention detected: {img}")

        results.append({
            "image": img,
            "extracted": extracted,
            "analysis": analysis
        })
    return results
```

Your control flow is right there in the code. No black-box "agent" deciding when to call what tool. You see exactly what happens, when it happens - without LangChain.

## Performance Notes

With ocrskill's NVIDIA GPU-backed inference, each image processes in under 500ms. For a monitoring agent scanning hundreds of posts per minute, this latency is the difference between real-time intelligence and stale data.

The leaner your code, the faster it runs. Removing framework overhead means fewer imports, less memory, and clearer stack traces when you need to debug.

## What's Next

- Add sentiment analysis on extracted text (just another function call)
- Store results in a vector database for RAG (plain HTTP requests)
- Set up alerts for competitor mentions or trending topics (your own if-statements)

The combination of ocrskill's speed and clean, direct code makes this stack ideal for production-grade social media intelligence. Sometimes the best framework is no framework - without LangChain, the solution is lighter, faster, and easier to maintain.

Most OCR pipelines have an awkward second half. You get clean text out of an image, and then you write brittle regexes, hand-tuned prompts, and parsing glue to turn that text into the handful of values your application actually needs. The OCR was the easy part. The structuring was the tax.

Today that tax is gone. OCRskill now extracts **structured JSON directly from images** in a single request. You name the fields you want, and the API returns clean, typed values - dates normalized to `YYYY-MM-DD`, strings trimmed, placeholders rejected - ready to drop straight into a database.

## Name Fields, Get JSON

The new endpoint is `POST /ocr.json`. Add a `fields` query parameter listing what you need, upload an image, and you're done:

```bash
curl "https://api.ocrskill.com/ocr.json?fields=last_name,first_name,nationality,birthdate" \
  -H "Authorization: Bearer sk-your-key-here" \
  -F "file=@id-card.png"
```

```json
{
  "last_name": "MUSTERMANN",
  "first_name": "ERIKA",
  "nationality": "DEUTSCH",
  "birthdate": "1964-08-12"
}
```

Under the hood, OCRskill reads the image, extracts the text, and maps it onto a typed schema built from exactly the fields you requested. You skip the chat-completion boilerplate, the schema wrangling, and the post-processing. One request in, structured data out.

## Why This Matters

The point of structured extraction is that the shape of your output is **predictable**. Required fields are guaranteed present or the request fails loudly with a `400` - no silently empty columns three weeks later. Optional fields (just append `?` to the name) are omitted cleanly when they aren't in the document. Dates come back in ISO format every time.

That predictability is what lets you wire OCR straight into a pipeline instead of babysitting it.

## Use Cases

### Identity documents

Pull `last_name`, `first_name`, `birthdate`, `nationality`, `cnp`, `id_card_series_number`, `expiration_date`, and more straight from a photo of an ID card.
Onboarding and KYC flows that used to need a human in the loop become a single API call.

### Invoices and receipts

Extract `company_name`, `invoice_date`, or `receipt_date` from a scan or a phone photo. Feed accounts-payable automation without a template-matching step per vendor.

### Vehicle registration documents

OCRskill understands the standardized A-Z fields of vehicle registration certificates: `license_plate`, `vehicle_identification_number`, `vehicle_brand`, `vehicle_model`, `engine_power`, `first_registration_date`, `owner_full_address`, and the rest. Fleet, insurance, and leasing workflows get a turnkey parser.

### Articles and written content

Turn a screenshot of an article into `title`, `sub_title`, `article_abstract`, `publish_date`, and `tags` - or ask for `concise_summary` and let the model write one when the source doesn't include it. Great for content ingestion and RAG indexing.

### Event flyers and posters

Lift `event_name`, `event_venue`, `event_dates`, and a normalized `event_date` out of a poster image to populate a calendar or listings feed automatically.

### Video thumbnail intelligence

For creator-economy analytics, extract `thumbnail_hook_text`, `video_title`, `video_channel`, `video_length_str`, and `date_posted_str` from a thumbnail or screenshot. Build datasets of what hooks and titles actually perform, at scale.

## Discovery Mode

Not sure which fields a document contains? Drop the `fields` parameter entirely and OCRskill runs in discovery mode - it considers every supported field optional, extracts whatever is meaningfully present, and returns only the values it actually found:

```bash
curl "https://api.ocrskill.com/ocr.json" \
  -H "Authorization: Bearer sk-your-key-here" \
  -F "file=@flyer.jpg"
```

It's a fast way to explore a new document type before you lock in an exact schema.
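
The `fields` syntax described above (comma-separated names, a trailing `?` for optional fields, no parameter at all for discovery mode) can be assembled programmatically. The sketch below is illustrative: `build_ocr_json_url` is a hypothetical helper, and it percent-encodes the `?` marker as `%3F`, which assumes the server applies ordinary URL decoding to the query string.

```python
from urllib.parse import urlencode

OCR_JSON_URL = "https://api.ocrskill.com/ocr.json"


def build_ocr_json_url(required=(), optional=()) -> str:
    """Build the /ocr.json URL for the given required and optional fields."""
    names = list(required) + list(optional)
    # Duplicate field names are rejected by the API, so fail early client-side
    if len(set(names)) != len(names):
        raise ValueError("Duplicate field name in request")

    # Optional fields are marked by appending "?" to the name
    fields = list(required) + [f"{name}?" for name in optional]
    if not fields:
        # No fields parameter at all: discovery mode
        return OCR_JSON_URL

    # Keep commas literal so the URL matches the curl examples
    query = urlencode({"fields": ",".join(fields)}, safe=",")
    return f"{OCR_JSON_URL}?{query}"
```

For example, `build_ocr_json_url(["last_name", "first_name"], ["nationality"])` requests two required fields and one optional one, while calling it with no arguments yields the bare discovery-mode URL.
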
## Same Inputs You Already Use

`/ocr.json` accepts the same image inputs as the plain-markdown `/ocr` endpoint: multipart form uploads (`-F "file=@image.png"`), raw binary (`--data-binary`), and Mistral-style JSON bodies with a `document_url` data URI. PNG, JPEG, WebP, and GIF are all supported.

If you ever want readable text instead of structured fields, the same `/ocr` endpoint still returns clean Markdown.

## Read the Full Reference

The complete list of supported fields, all request formats, the `fields` syntax for required vs. optional values, and the error-handling details are documented in the [Structured OCR JSON API reference](/ocr-json-api).

[Grab a free API key](https://api.ocrskill.com/get-key.json) and turn your first image into typed JSON in a single call.

## Frequently Asked Questions

### What is structured data, and why does it matter for OCR?

Structured data is information shaped into named, typed fields rather than loose prose. For OCR it matters because a wall of recognized text still needs parsing, whereas structured data is ready to store and query immediately. OCRskill returns structured data as a clean JSON format keyed by the field names you request.

### How is this different from Google structured data or schema structured data markup?

It's a different use of the phrase. Google structured data (schema.org structured data markup) is JSON-LD you add to a web page so search engines understand its content. The structured data here is the extracted output of a document image. If you searched for "google structured data" hoping to mark up a page, that's a separate topic; this feature is about turning images into records.

### Is there a JSON schema, and what JSON format do I get?

Yes - the output follows a JSON schema generated from the fields you ask for, so the JSON format is predictable on every call. Dates come back as `YYYY-MM-DD` and strings are trimmed.

### Can I do PDF to JSON, PDF to image, or image to PDF?
OCRskill processes images directly. For PDF to JSON, do a PDF to image conversion first (one image per page) and send those to `/ocr.json`. We don't ship an image to PDF or PDF to image tool - handle that conversion in your own pipeline, then let OCRskill do the structured extraction.

### What about plain image to text, or turning an image to prompt?

For straight image to text, the `/ocr` endpoint returns Markdown. A popular image to prompt workflow is to OCR an image to Markdown and feed it to an LLM - but if you only need specific values, structured extraction skips that step.

### My image text extraction failed - how do I do a cause analysis?

A failed extraction returns a `400` describing the cause: a required field missing from the document, an unknown or duplicate field name, or an unsupported image. The error includes the OCR'd `input_text` so your cause analysis can see exactly what was read before structuring failed. Marking shaky fields optional with `name?` usually resolves it.

### Does Claude have OCR capabilities? Can I use Claude for OCR?

Claude has some OCR capabilities, and there's even a Claude Code OCR skill for agentic use. But for accurate, high-volume structured extraction, pairing Claude for OCR orchestration with a dedicated endpoint like OCRskill gives you reliable image text extraction plus typed JSON - the Claude OCR capabilities handle reasoning, OCRskill handles the pixels.

For the full endpoint and field reference, see the [Structured OCR JSON API docs](/ocr-json-api).

The email arrived on a Tuesday morning. A logistics company had been trying for weeks to extract data from a set of delivery receipts. These weren't ordinary documents - they were photographs taken in dimly lit warehouses, often at odd angles, with crumpled paper surfaces that created shadows deep enough to swallow text whole.

Every Optical Character Recognition (OCR) system they tried failed in the same way. The shadows became black holes, and the text at the edges of those shadows disappeared entirely. What should have been a straightforward automated data extraction task became a manual nightmare costing them hundreds of hours each month.

They sent us one particularly challenging example. The receipt was technically legible to human eyes, but only just barely. The paper had folded in the center, creating a canyon of darkness where crucial pricing information sat. The photograph had been taken from above at a slight angle, meaning the text wasn't perfectly aligned. And somehow, the combination of overhead warehouse lighting and the phone's flash had created competing shadows that made the image look almost artistic - but functionally broken for traditional OCR extraction.

## The Anatomy of a Challenging Document Image

Before diving into OCR solutions, we needed to understand exactly what made this image so difficult. Breaking it down revealed four distinct problems that often appear together in real-world document photography:

- **Shadow interference** was the most obvious issue. When a document isn't perfectly flat against a contrasting background, any light source from above creates gradients of darkness. The human eye adjusts naturally to these variations, but OCR software does not. Areas that appear merely dim to a human become completely illegible to basic data extraction tools.
- **Foreground confusion** compounded the problem. In this image, the receipt wasn't isolated. Behind it, warehouse shelving and equipment created visual clutter. The edges of the receipt blended into the background. Distinguishing what was part of the document versus what was environmental noise required something more sophisticated than standard edge detection.
- **Angle and perspective distortion** added another layer of complexity. The photographer had held the phone slightly above the document, pointing downward. This created a subtle trapezoid effect where the top of the receipt appeared narrower than the bottom. Standard grid-based OCR approaches assume flat, frontal alignment. This assumption failed here.
- **Texture interference** from the crumpled paper itself created micro-shadows within the document. The creases caught light differently than flat surfaces. What looked like text to the human eye looked like visual noise to simpler image analysis methods.

## Why Traditional OCR Fails: Our Research Path

Our first attempts followed conventional document processing wisdom. We tried adjusting contrast. We applied different lighting normalization techniques. We created custom filters designed specifically for shadow recovery in document images.

Each approach helped with one problem while making others worse. Increasing contrast to bring out text in shadowed areas also amplified the background clutter. Reducing the impact of that background dimmed the already-faint text we were trying to save. It became clear that any OCR solution treating the entire image uniformly would fail because different regions required different handling.

The breakthrough came from considering how human perception actually works. When you look at that warehouse receipt, you don't analyze every pixel equally. Your eye is drawn to what appears closest and most prominent. The receipt, despite its flaws, occupies the visual foreground. The shelving behind it recedes into background context. You focus attention naturally on what matters.

This observation led to a fundamental question: could we replicate this perceptual prioritization computationally?
Could we identify which parts of an image represent foreground objects versus background environment, then use that understanding to guide how our OCR engine processes the document?

## A Depth-Aware Approach to Document Image Processing

The research direction shifted toward depth estimation - not the physical measurement of distance, but the perceptual understanding of what appears nearer versus farther in a two-dimensional image. This isn't about knowing the receipt is twelve inches from the camera while the shelf is ten feet away. It's about recognizing that the receipt visually dominates the frame while the environment recedes.

Developing this capability required understanding how depth cues manifest in photography. Objects that appear closer tend to have sharper edges, more detailed textures, and occupy more central or prominent positions in the frame. Objects farther away become softer, less detailed, and often appear at the periphery.

For document photography specifically, this perceptual depth analysis reveals something crucial: the paper surface, despite its flaws, presents as the clear foreground element. The shadows on its surface are part of that foreground. The warehouse behind it is the background. This distinction matters because it allows for targeted image processing before OCR extraction begins.

Once foreground identification succeeds, the processing strategy changes completely. Instead of applying uniform adjustments across the entire image, we can amplify the foreground while suppressing background interference. The receipt becomes brighter and more prominent. The distracting environment fades. The shadows on the paper surface remain, but now they exist within a properly exposed document rather than being one variable among many competing for attention.

## Document Segmentation for Improved OCR Accuracy

With the foreground properly emphasized, the next challenge becomes isolation.
Even a well-exposed image containing multiple documents, or a document surrounded by clutter, presents data extraction difficulties. The goal shifts from "make the document visible" to "separate the document from everything else."

This is where visual understanding meets practical OCR extraction. Modern Computer Vision techniques can identify boundaries and shapes with remarkable precision, but they work best when given clean, well-prepared input. The amplification step creates exactly this preparation. By making foreground objects stand out distinctly from their surroundings, it provides the ideal conditions for document boundary detection to succeed.

The segmentation process identifies the precise edges of the document. It distinguishes the receipt from the table beneath it, the hand holding it, or the background environment. This boundary information becomes a mask - a digital stencil that says "process everything inside this shape, ignore everything outside."

Critically, this mask is applied back to the original image, not to the amplified version. The amplification served its purpose as preparation for detection. Now that we know exactly where the document is, we want the actual content from the original source. The shadows, the texture, the slight angle - all of these remain, but now they're contained within an isolated region rather than competing with environmental noise, resulting in significantly higher OCR accuracy.

## A Production OCR Pipeline for Real-World Documents

What began as a research project to solve one impossible image has become a robust approach for handling challenging document photography at scale. The Intelligent Document Processing (IDP) workflow now follows a consistent three-phase structure:

1. **Perceptual Preparation**: Analyzes the image to understand foreground versus background relationships. This isn't about changing the image yet - it's about building a map of what matters and what doesn't.
2. **Selective Amplification**: Uses that map to create a version of the image where foreground objects are visually emphasized. Background elements are suppressed through nuanced adjustment that respects the natural visual hierarchy humans perceive instinctively.
3. **Targeted Segmentation**: Identifies precise boundaries within this prepared environment, isolating the specific document or region of interest. This boundary information then enables focused OCR extraction from the original source, preserving authentic detail while eliminating environmental interference.

For that logistics company, this approach transformed their impossible receipts into reliably extractable documents. The shadows that once swallowed pricing information became manageable variations within a properly isolated document region. The warehouse clutter that confused previous OCR engines became irrelevant background, cleanly separated from the data that mattered.

## Key Lessons for Intelligent Document Processing (IDP)

The most important lesson from this research journey is that document extraction cannot rely on idealized assumptions. Real-world images arrive with problems - shadows, angles, clutter, and imperfect lighting. OCR systems that expect pristine scans or perfectly flat photographs will fail when reality intrudes.

The second lesson is that image preparation matters more than raw processing power. Feeding a challenging image directly into OCR tools, no matter how sophisticated, yields poor results. Taking time to understand the visual structure of the image - to distinguish foreground from background, to identify what deserves attention versus what should be ignored - creates the conditions for successful extraction.

The third lesson is that human perception offers a valuable blueprint. We don't read documents by analyzing pixels uniformly. We focus on what appears prominent and relevant.
Building AI systems that mimic this perceptual prioritization, using depth and prominence cues to guide processing, aligns technical capabilities with how we naturally interact with visual information. ## FAQ: Preparing Images for Better OCR Results **Q: My document has heavy shadows. Should I try to remove them before sending the image for OCR?** Shadow removal is difficult to do manually without losing information. If you have control over the photography environment, the best solution is prevention - use even, diffused lighting and keep the document flat against a contrasting background. If the image already exists with shadows intact, modern OCR APIs can now handle these variations through perceptual analysis rather than requiring manual cleanup. **Q: What's the ideal camera angle for photographing documents?** Direct overhead shots, with the camera parallel to the document surface, produce the best OCR results. This minimizes perspective distortion and ensures even lighting across the page. If you must photograph at an angle due to physical constraints, try to keep the angle shallow - thirty degrees or less from parallel - and position the camera so the document fills most of the frame. **Q: Should I crop the image to just the document before processing?** If you can cleanly crop without cutting off content, this can help. However, aggressive cropping that removes the context around a document can actually make boundary detection harder, since OCR systems use surrounding contrast to identify where documents end. A better approach is to frame the document properly during capture, leaving a small margin of contrasting background visible. **Q: Does image resolution matter for OCR accuracy?** Yes, but with diminishing returns. For standard printed documents, a resolution that captures text clearly is sufficient - typically anything above 150 DPI equivalent is adequate. 
Extremely high resolutions create larger files without improving data extraction quality, since the limiting factor becomes the physical characteristics of the text itself rather than pixel count.

**Q: What about color versus black and white scans?**

Color preservation is generally preferable. While converting to grayscale reduces file size, it can also eliminate valuable visual cues that help distinguish foreground from background. Color information helps identify paper surfaces, separate text from colored backgrounds, and maintain the natural contrast that aids document detection. Only convert to grayscale if file size constraints absolutely require it.

**Q: How do I handle multiple documents in one image?**

When possible, photograph documents individually. Overlapping documents create complex visual situations where shadows and edges interact in unpredictable ways. If you must capture multiple documents together, arrange them flat against a contrasting surface with clear gaps between them. Avoid stacking or overlapping, which creates the kind of shadow interference that challenges data extraction systems.

**Q: What file format should I use for OCR?**

Lossless formats like PNG preserve all visual information, which is valuable for challenging documents. JPEG is acceptable if the compression quality is high - avoid aggressive compression that introduces artifacts around text edges. For most purposes, modern OCR systems handle either format equally well, so use whichever fits your workflow while maintaining image quality.

**Q: Can damaged or wrinkled documents be successfully processed?**

Physical damage presents genuine challenges, but they're not insurmountable. The key is capturing the damage clearly rather than trying to hide it. A well-lit photograph showing a crease honestly is better than a poorly lit attempt to minimize it.
Advanced perceptual analysis techniques can often distinguish between document texture and actual content, treating creases and folds as surface characteristics rather than confusing them with text.

## Conclusion: Overcoming OCR Failures in Real-World Scenarios

The warehouse receipt that started this research journey seemed impossible at first glance. It violated every assumption about clean document photography. Yet by stepping back and asking how human perception handles the same challenges, we found a path forward.

The resulting approach - perceptual preparation, selective amplification, and targeted segmentation - doesn't just solve one difficult image. It creates a general framework for handling the messy reality of real-world document photography. Shadows, angles, and clutter become manageable variables rather than insurmountable OCR obstacles.

For organizations dealing with imperfect document sources, this represents a shift in what's possible with Intelligent Document Processing. The logistics company that sent us that first challenging image now processes thousands of warehouse receipts automatically. The shadows that once demanded manual intervention now flow through the same automated OCR pipeline as their cleaner documents.

The research continues. Every challenging image teaches something new about the gap between idealized assumptions and practical reality. But the foundation is solid: understand the visual structure first, prepare the image accordingly, and then extract with precision. That's the difference between OCR systems that fail when reality intrudes and document AI systems that adapt to meet it.
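
To make "prepare the image accordingly, and then extract with precision" a little more concrete, here is a toy sketch of the amplification and segmentation stages. It is an illustration under stated assumptions, not the production method: the prominence map is assumed to already exist as values between 0 and 1, images are plain nested lists to keep the example dependency-free, and the function names (`amplify_foreground`, `foreground_box`, `crop`) are hypothetical. Thresholding the prominence map stands in for real segmentation, which would be considerably more involved.

```python
from typing import List, Tuple

Grid = List[List[float]]          # row-major 2D grid, values in [0, 1]
Box = Tuple[int, int, int, int]   # (top, left, bottom, right), end-exclusive


def amplify_foreground(image: Grid, prominence: Grid, floor: float = 0.2) -> Grid:
    """Weight each pixel by its prominence so background pixels are dimmed.

    `floor` is the minimum weight (an assumed tuning knob), so the
    background is suppressed rather than erased outright.
    """
    return [
        [pixel * (floor + (1.0 - floor) * weight)
         for pixel, weight in zip(image_row, map_row)]
        for image_row, map_row in zip(image, prominence)
    ]


def foreground_box(prominence: Grid, threshold: float = 0.5) -> Box:
    """Bounding box of all cells whose prominence exceeds `threshold`."""
    hits = [
        (r, c)
        for r, row in enumerate(prominence)
        for c, weight in enumerate(row)
        if weight > threshold
    ]
    if not hits:
        raise ValueError("no region exceeds the prominence threshold")
    rows = [r for r, _ in hits]
    cols = [c for _, c in hits]
    return min(rows), min(cols), max(rows) + 1, max(cols) + 1


def crop(image: Grid, box: Box) -> Grid:
    """Cut the boxed region out of the original, unmodified image."""
    top, left, bottom, right = box
    return [row[left:right] for row in image[top:bottom]]


# Toy 4x4 "photo": a 2x2 document surrounded by bright clutter.
image = [
    [0.9, 0.9, 0.9, 0.9],
    [0.9, 0.4, 0.6, 0.9],
    [0.9, 0.5, 0.3, 0.9],
    [0.9, 0.9, 0.9, 0.9],
]
prominence = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 1.0, 0.0],
    [0.0, 1.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]

emphasized = amplify_foreground(image, prominence)  # background dimmed
box = foreground_box(prominence)                    # where the document sits
document = crop(image, box)                         # pixels from the original
```

Note that the crop is taken from the original image rather than the emphasized copy, mirroring the point that boundaries come from the prepared view while extraction works on the untouched source.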